iSnap Demo

Welcome to the iSnap demo! iSnap is a data-driven, intelligent tutoring system for block-based programming, offering support such as hints, feedback and curated examples. iSnap extends Snap, an online, block-based programming environment designed to make programming more powerful and accessible to novices.

iSnap is a project of the HINTS Lab at North Carolina State University, in collaboration with the Game2Learn Lab. A public mirror of iSnap is available on GitHub.

The iSnap project has also produced various papers and public datasets, which can be found below.

The construction of a Snap program and the corresponding evaluation.

iSnap combines a number of systems and support features, which you can read about below:

Data-driven Hints

Try the SourceCheck Hints demo

Using data collected from real students working on programming assignments, we are able to generate on-demand, next-step hints for students who get stuck on these assignments. The SourceCheck algorithm matches students' code to previously observed code from students who successfully completed the assignment and recommends an edit based on how those students progressed.
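The match-then-recommend idea can be illustrated with a minimal sketch. This is not the SourceCheck algorithm itself: it assumes a program can be flattened into a list of block names and swaps in Python's standard `difflib.SequenceMatcher` for the matching step. The function names and the block names in the usage note are hypothetical.

```python
from difflib import SequenceMatcher


def closest_solution(student, solutions):
    """Return the prior solution most similar to the student's block list."""
    return max(solutions, key=lambda s: SequenceMatcher(None, student, s).ratio())


def next_step_hint(student, solutions):
    """Suggest the first edit that moves the student toward the closest solution.

    Returns a tuple describing the edit, or None if the code already matches.
    """
    target = closest_solution(student, solutions)
    matcher = SequenceMatcher(None, student, target)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "insert":
            return ("insert", target[j1], i1)       # add this block at index i1
        if tag == "delete":
            return ("delete", student[i1], i1)      # this block probably doesn't belong
        if tag == "replace":
            return ("replace", student[i1], target[j1], i1)
    return None
```

For a student whose code is `["whenGreenFlag", "penDown", "move"]` and a prior solution `["whenGreenFlag", "penDown", "repeat", "move", "turn"]`, this sketch suggests inserting a `repeat` block at index 2.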

See an explanation of iSnap's help features below, or try them out yourself with this demo. Select any assignment and test out the hints.

When a student needs help, they can ask iSnap to check their work. To start off, it shows two colors:

- Blocks that are highlighted in magenta probably don't belong in a solution.

- Blocks that are highlighted in yellow probably do belong in the solution, but may not be in the right place.

If a student requests a next-step hint, iSnap also adds:

- Blue + buttons and input outlines indicating where new blocks can be inserted

Previous Version of iSnap Hints

The above demo shows off iSnap's newest hint interface, but much of the earlier research with iSnap used a simpler hint interface, based on the Contextual Tree Decomposition (CTD) algorithm.

When a student is stuck, they can request a hint with the click of a button.

Students can request hints about whole scripts or individual blocks.

iSnap Hints Publications

For more information on the data-driven algorithm that powers iSnap, see:

Price, T. W., R. Zhi and T. Barnes. "Evaluation of a Data-driven Feedback Algorithm for Open-ended Programming." International Conference on Educational Data Mining. 2017. [Paper | Slides]

Price, T. W., Y. Dong and T. Barnes. "Generating Data-driven Hints for Open-ended Programming." International Conference on Educational Data Mining. 2016. [Paper | Slides]

For more information on iSnap and its initial pilot evaluation, see:

Price, T. W., Y. Dong and D. Lipovac. "iSnap: Towards Intelligent Tutoring in Novice Programming Environments." ACM Special Interest Group on Computer Science Education (SIGCSE). 2017. [Paper | Slides]

Price, T. W., Z. Liu, V. Cateté and T. Barnes. "Factors Influencing Students’ Help-Seeking Behavior while Programming with Human and Computer Tutors." International Computing Education Research (ICER) Conference. 2017. [Paper | Slides]

Price, T. W., R. Zhi and T. Barnes. "Hint Generation Under Uncertainty: The Effect of Hint Quality on Help-Seeking Behavior." International Conference on Artificial Intelligence in Education. 2017. [Paper | Slides]

Price, T. W., R. Zhi, Y. Dong, N. Lytle and T. Barnes. "The Impact of Data Quantity and Source on the Quality of Data-driven Hints for Programming." International Conference on Artificial Intelligence in Education. 2018, forthcoming.

Adaptive Immediate Feedback (AIF)

Try the AIF demo

Programming can be quite challenging, and for novices it can be filled with negative self-assessments (e.g. "I'm not cut out for this."). What students often fail to realize is that they are making progress; they just can't see it yet.

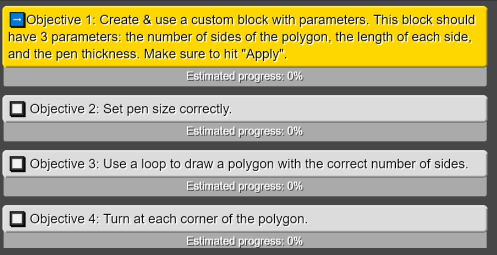

The AIF system addresses this in part by breaking the problem down into subgoals that are more manageable than an entire problem.

AIF also provides students with real-time feedback on their progress on programming assignments, using a hybrid data-driven progress assessment algorithm. As students work, AIF monitors their progress, displaying it as a progress bar. It can even detect progress that's out of order, e.g. not yet in the correct procedure.

When students make a mistake, they can see that immediately, as the progress bar decreases.
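A rough sketch of subgoal-based progress tracking follows. It is not AIF's hybrid data-driven algorithm: it assumes each subgoal can be described by a set of required blocks, and the subgoal names, block names and `subgoal_progress` function are all hypothetical. Because it looks for blocks anywhere in the program, it credits work that is not yet in the correct procedure, and the value drops again when a required block is removed.

```python
def subgoal_progress(program, subgoals):
    """Return the fraction of subgoals whose required blocks all appear in the program.

    program:  dict mapping a script or procedure name to its list of block names
    subgoals: dict mapping a subgoal name to the set of block names it requires
    """
    # Collect blocks from every script, so out-of-place work still counts.
    present = {block for script in program.values() for block in script}
    done = sum(1 for required in subgoals.values() if required <= present)
    return done / len(subgoals)


# Hypothetical subgoals for a "draw a square" assignment.
subgoals = {
    "draw a side": {"penDown", "move"},
    "repeat the sides": {"repeat", "turn"},
}

half = subgoal_progress({"main": ["penDown", "move"]}, subgoals)
full = subgoal_progress({"main": ["penDown", "move"], "scratch": ["repeat", "turn"]}, subgoals)
```

Here `half` is 0.5 and `full` is 1.0, even though the loop blocks sit in a separate script.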

AIF also pops up encouraging messages, both when students make progress and when they go a while without making any.

AIF Publications

- S. Marwan, Y. Shi, I. Menezes, M. Chi, T. Barnes, T. W. Price, “Just a Few Expert Constraints Can Help: Humanizing Data-Driven Subgoal Detection for Novice Programming”. Proceedings of the International Conference on Educational Data Mining (EDM) 2021. (Acceptance Rate 22.0%, 22/100 Full Papers, Best Full Paper Award). [Paper | Video Presentation]

- S. Marwan, G. Gao, S. Fisk, T.W. Price, and T. Barnes. “Adaptive Immediate Feedback Can Improve Novice Programming Engagement and Intention to Persist in Computer Science”. In the sixteenth annual ACM International Computing Education Research (ICER), 2020. (22.7% acceptance rate; 27/119 full papers). [Paper | Video Presentation]

Example Helper

Try the Example Helper demo

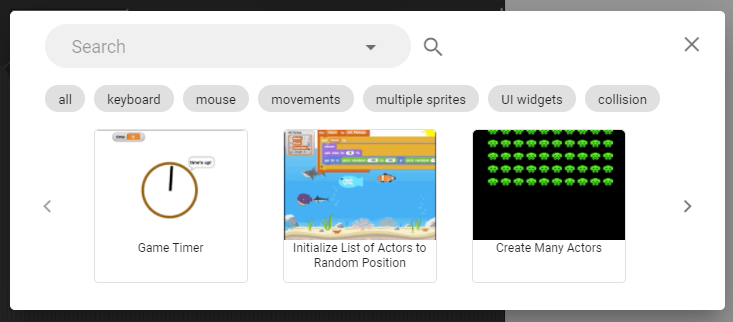

Example Helper is a code example gallery to support open-ended programming, where students design and implement projects connected to their own interests. Because students design these programs and specify their own goals, it is difficult to support them with hints and feedback. Example Helper addresses this by offering a curated gallery of code examples, derived from prior students' projects.

Using Example Helper, a student can find an example by browsing through the gallery, or by filtering and searching for examples, either by clicking on a tag or by entering a query in a search box.
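The browse, tag and search options can be pictured as one filter over the gallery. The sketch below is illustrative only and assumes each example has a title and a set of tags; the `Example` class, the `find_examples` function and the sample entries are hypothetical, not Example Helper's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class Example:
    title: str
    tags: set = field(default_factory=set)


def find_examples(gallery, tag=None, query=""):
    """Return examples that carry the tag (if given) and whose title contains the query."""
    query = query.lower().strip()
    return [
        example for example in gallery
        if (tag is None or tag in example.tags)
        and query in example.title.lower()  # an empty query matches every title
    ]


gallery = [
    Example("Bounce off walls", {"motion", "collision"}),
    Example("Keep score", {"variables"}),
    Example("Move with arrow keys", {"motion", "input"}),
]
```

Calling `find_examples(gallery)` corresponds to browsing the whole gallery, `find_examples(gallery, tag="motion")` to clicking a tag, and `find_examples(gallery, query="score")` to typing in the search box.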

After finding a needed example, the student can preview it to run, edit and tinker with the code. They are encouraged to read the code, relate it to its output, and write a self-explanation.

Example Helper Publications

- W. Wang, A. Kwatra, J. Skripchuk, N. Gomes, A. Milliken, C. Martens, T. Barnes, T. Price, “Novices’ Learning Barriers When Using Code Examples in Open-Ended Programming”. ITiCSE’21 – Proceedings of the 2021 ACM Conference on Innovation and Technology in Computer Science Education. [Paper]

Debugging Support

View a demo of the self-explainable debugging

One of the most challenging tasks for novices is understanding why their code isn't working the way they expect. iSnap offers a debugging visualization (currently in development) that helps students understand the execution of visual programs.

After running a script, students can hover over any block in their code to see what it did during execution.

When the student hovers over the outer loop in this code, they see all the movement the sprite made as a part of each iteration of the loop.

Hovering over the inner loop shows the same movement, but broken down into the smaller steps of the inner loop.

Hovering over a variable-related block shows how the variable's value changed as the script executed and the sprite moved.
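One way to picture the per-block view of execution is a toy interpreter that records, for every block, the sprite positions produced each time that block ran. This is a sketch under assumptions, not iSnap's implementation: the dictionary-based block format, the `run` function and the block ids are all hypothetical.

```python
import math


def run(block, state, trace):
    """Execute a block and record the positions it produced under the block's id."""
    start = len(state["path"])
    if block["kind"] == "move":
        angle = math.radians(state["heading"])
        x, y = state["pos"]
        state["pos"] = (round(x + block["steps"] * math.cos(angle), 6),
                        round(y + block["steps"] * math.sin(angle), 6))
        state["path"].append(state["pos"])
    elif block["kind"] == "turn":
        state["heading"] += block["degrees"]
    elif block["kind"] == "repeat":
        for _ in range(block["times"]):
            for child in block["body"]:
                run(child, state, trace)
    # One entry per execution of this block: the positions visited while it ran.
    trace.setdefault(block["id"], []).append(state["path"][start:])


inner = {"id": "inner", "kind": "repeat", "times": 2,
         "body": [{"id": "move", "kind": "move", "steps": 10}]}
outer = {"id": "outer", "kind": "repeat", "times": 2,
         "body": [inner, {"id": "turn", "kind": "turn", "degrees": 90}]}

state = {"pos": (0, 0), "heading": 0, "path": []}
trace = {}
run(outer, state, trace)
```

After this run, `trace["outer"]` holds a single entry covering all four positions, while `trace["inner"]` holds two entries of two positions each: the same movement, broken down by execution of the inner loop.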

Logging

View a demo of the logging output

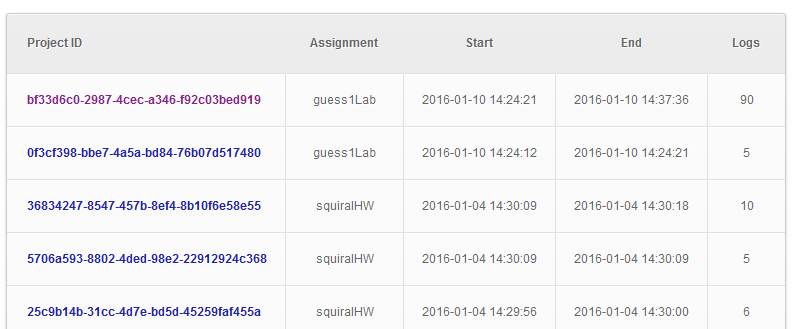

iSnap logs all actions taken by students in the environment, as well as snapshots of students' code as they work. Logs can be saved to a database for future analysis, review or grading. To get a quick overview of the programs that have been created on this demo site, check out the viewer page.
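As a rough illustration of action logging with code snapshots, the sketch below stores each event in a SQLite table and replays one student's history. The table layout, column names and function names are assumptions made for this example and do not reflect iSnap's actual log schema.

```python
import json
import sqlite3
import time


def open_log(path=":memory:"):
    """Open (or create) a log database; the schema here is hypothetical."""
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS log "
        "(logged_at REAL, student TEXT, event TEXT, snapshot TEXT)"
    )
    return db


def log_action(db, student, event, code):
    """Record one action together with a JSON snapshot of the student's code."""
    db.execute(
        "INSERT INTO log VALUES (?, ?, ?, ?)",
        (time.time(), student, event, json.dumps(code)),
    )
    db.commit()


def replay(db, student):
    """Return the student's events and code snapshots in the order they were logged."""
    rows = db.execute(
        "SELECT event, snapshot FROM log WHERE student = ? ORDER BY rowid",
        (student,),
    )
    return [(event, json.loads(snapshot)) for event, snapshot in rows]
```

Storing a full snapshot with every event keeps replay simple, at the cost of larger logs; a real system might store differences between snapshots instead.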

Note: this is a demo site and does not include actual student data.

iSnap offers a basic interface to navigate and view the logs it generates.

Dataset

The iSnap project has also produced public datasets, consisting of log data from students working in an introductory computing class, which can be found on the PSLC Datashop.